This is the standard efficient algorithm for computing gradients in an MLP.

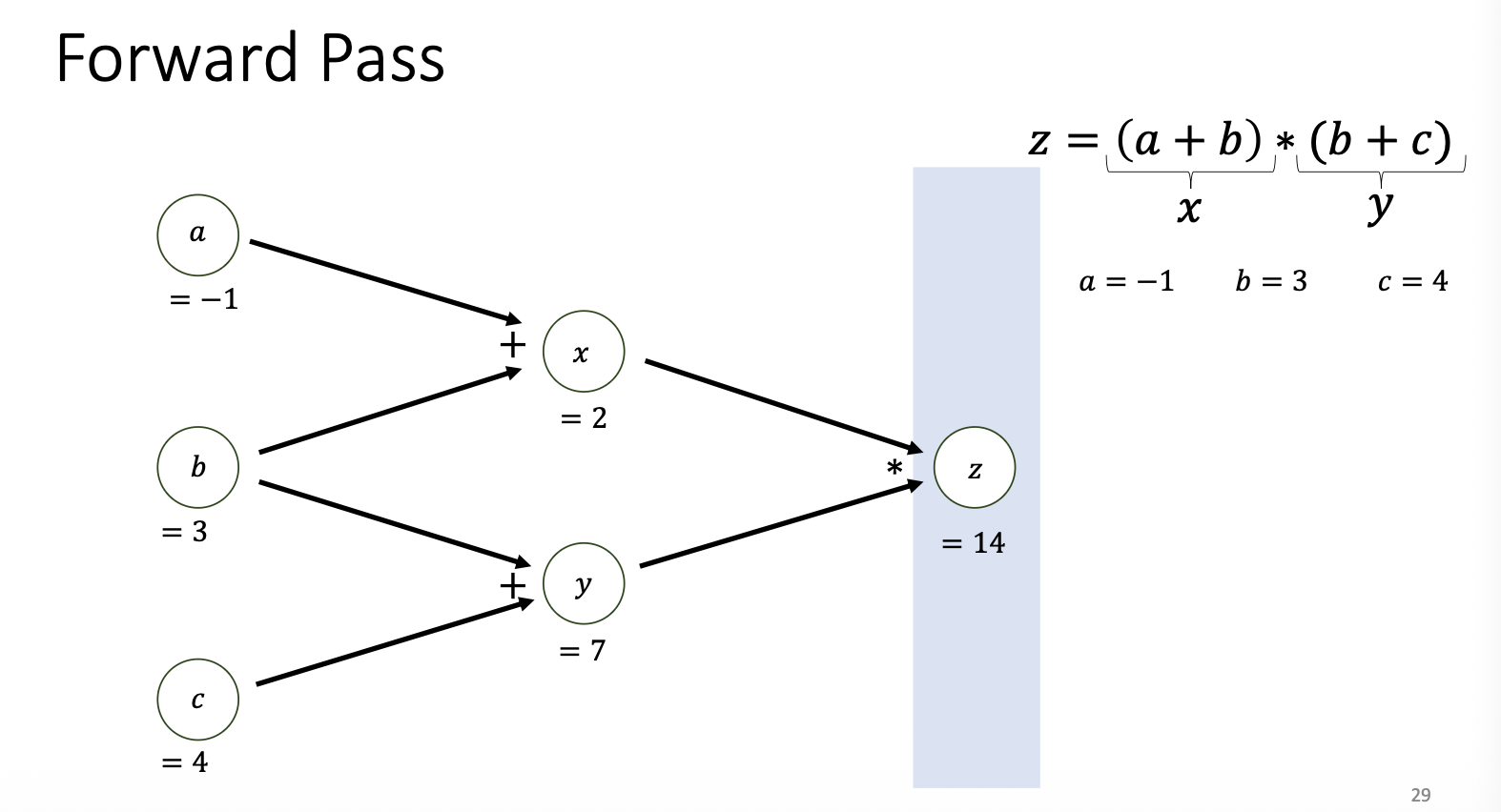

- Forward Pass: Move forward through the graph to compute all intermediate results and the final loss.

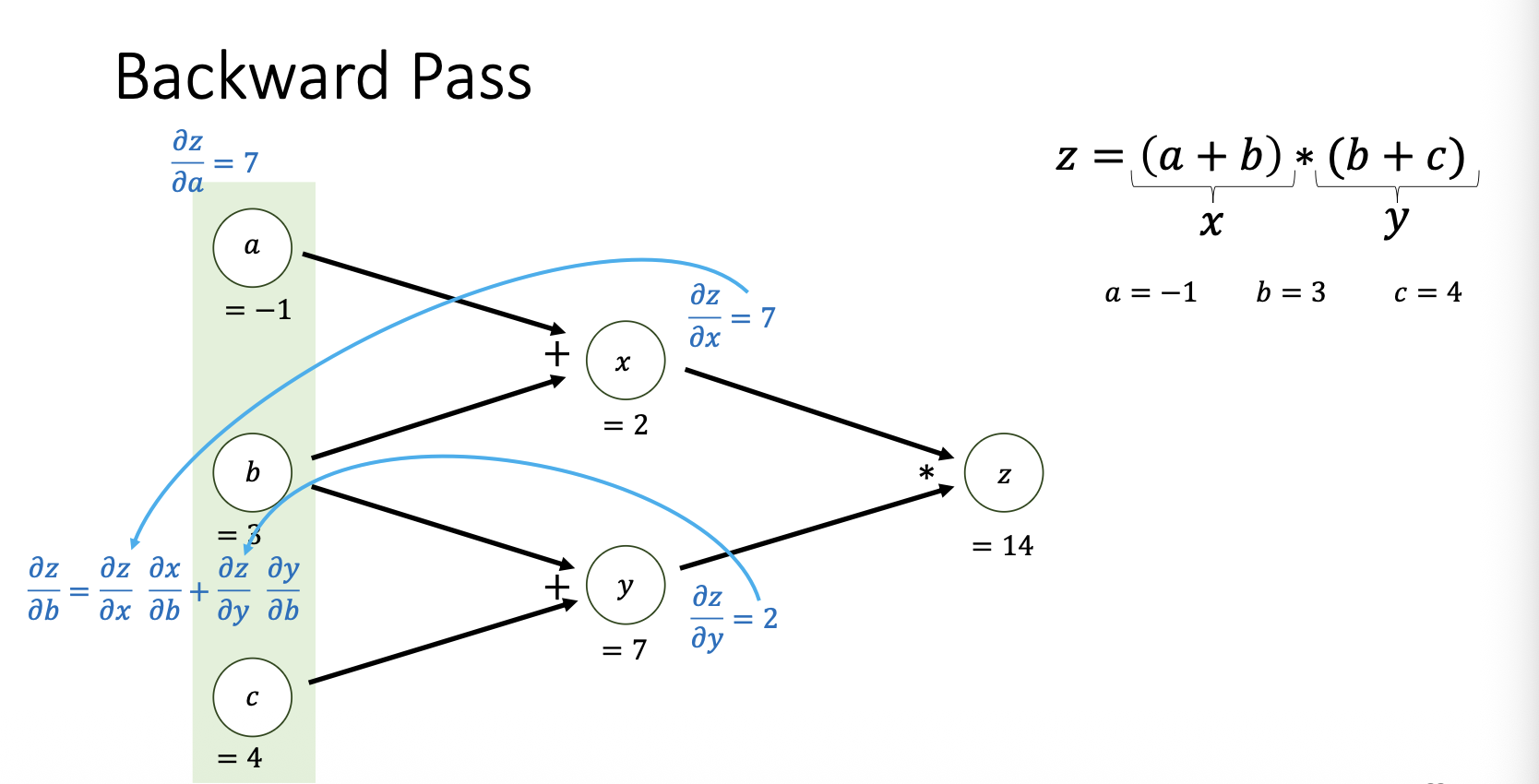

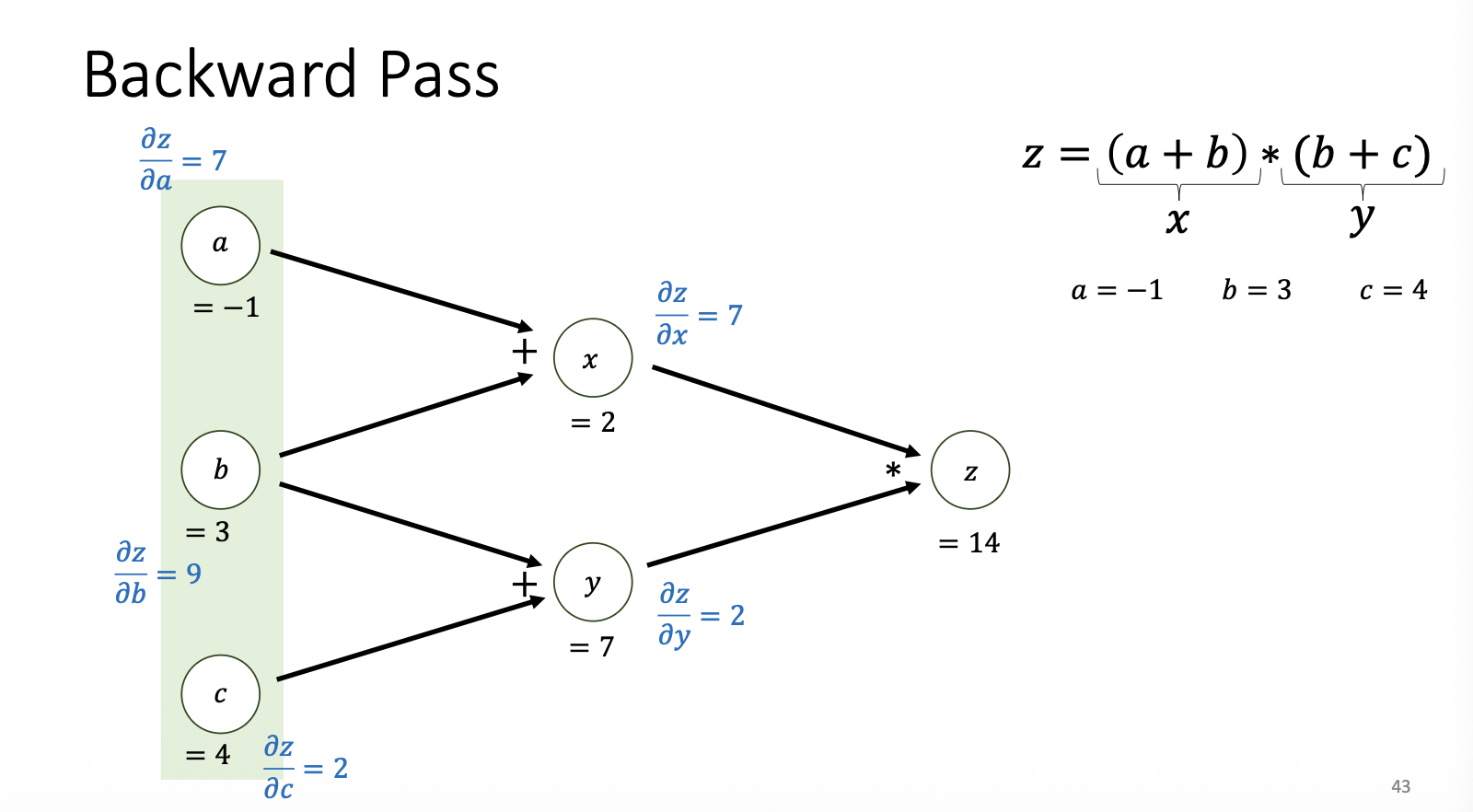

- Backward Pass: Move backward through the graph to compute gradients (partial derivatives) for every parameter, reusing the gradients already computed at successor nodes via the chain rule.
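The two passes can be sketched on a tiny network. Everything specific here is an assumption made for illustration and is not taken from the figures: one scalar input, one `tanh` hidden unit, a linear output, a squared-error loss, and the names `forward`, `backward`, and `params`.

```python
import math

def forward(params, x, target):
    """Forward pass: compute and cache every intermediate value and the loss."""
    w1, b1, w2, b2 = params
    z = w1 * x + b1            # hidden pre-activation
    h = math.tanh(z)           # hidden activation
    y = w2 * h + b2            # network output
    loss = (y - target) ** 2   # squared-error loss (illustrative choice)
    return loss, (z, h, y)

def backward(params, x, target, cache):
    """Backward pass: visit nodes in reverse order, reusing successor gradients."""
    w1, b1, w2, b2 = params
    z, h, y = cache
    dloss_dy = 2.0 * (y - target)          # loss node -> output
    dloss_dw2 = dloss_dy * h               # output -> w2
    dloss_db2 = dloss_dy                   # output -> b2
    dloss_dh = dloss_dy * w2               # output -> hidden activation
    dloss_dz = dloss_dh * (1.0 - h ** 2)   # tanh'(z) = 1 - tanh(z)^2
    dloss_dw1 = dloss_dz * x               # pre-activation -> w1
    dloss_db1 = dloss_dz                   # pre-activation -> b1
    return (dloss_dw1, dloss_db1, dloss_dw2, dloss_db2)

# Hypothetical parameter values and data point, chosen only for the demo.
params = (0.5, -0.1, 1.5, 0.2)
loss, cache = forward(params, x=2.0, target=1.0)
grads = backward(params, x=2.0, target=1.0, cache=cache)
print(loss, grads)
```

Each gradient in `backward` is a local derivative multiplied by a gradient computed one step earlier, so every node is visited once. That reuse is what makes the algorithm efficient compared with differentiating the loss separately for each parameter.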

Example